On February 5, Professor Xuejun Qian’s team at the School of Biomedical Engineering, ShanghaiTech University, published a research article online in Nature Health titled “A foundation model for breast and lung cancer screening using non-contrast computed tomography.” The study presents OMAFound, the first CT foundation model with “one-scan, multi-screening” capabilities for multi-cancer screening, and introduces a two-tier risk assessment system that operates at both the organ level and the individual level. Together, these contributions offer a new technical pathway toward broader multi-cancer screening.

Early cancer screening is a critical step in reducing cancer incidence and mortality. To work at the population level, however, screening must be affordable, convenient, and high-throughput. Traditional programs built around “one cancer type, one screening program” are costly in time, money, and medical resources, which makes them difficult to apply at scale among asymptomatic populations. Exploring a cost-effective “one-scan, multi-screening” strategy for multi-cancer early detection is therefore an important direction for improving universal health coverage. Non-contrast CT, especially low-dose CT, is a low-cost imaging modality that is widely used in medical settings, including physical examinations, outpatient visits, and inpatient care. It remains highly accessible even in relatively resource-limited regions, making it an ideal vehicle for “one-scan, multi-screening.” Manual image interpretation, however, is laborious and involves large volumes of information, and its diagnostic consistency and accuracy are difficult to guarantee. How to use artificial intelligence to achieve precise, stratified multi-cancer screening has therefore become a pressing challenge.

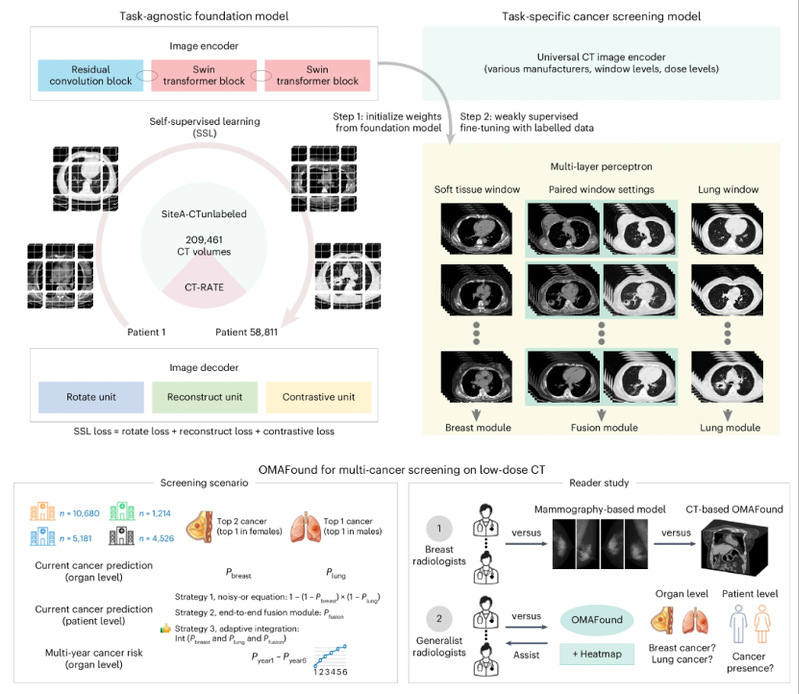

To this end, the research team developed OMAFound, a foundation model for multi-cancer screening based on non-contrast CT. The model was pretrained on more than 200,000 CT images using self-supervised learning, which extracts general CT representations that are more robust to differences in imaging devices, dose variations, and window-level settings. It was then fine-tuned on multi-window annotated data, significantly enhancing its multi-cancer screening capability. In multi-center retrospective validation, OMAFound's breast cancer and lung cancer screening performance was comparable to that of existing mainstream screening approaches: its breast cancer screening performance approached that of mammography-based screening systems, while its lung cancer screening performance approached that of dedicated low-dose CT screening systems. Beyond organ-level assessment, the team also proposed an individual-level risk identification framework that evaluates an individual’s overall cancer risk from a single CT scan. This framework can help identify high-risk individuals and enable efficient triage, guiding subsequent organ-specific specialist examinations and refined diagnosis in a targeted manner.
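To illustrate what “multi-window” CT input can mean in practice, the sketch below renders one CT slice under two display windows and stacks the results as channels. It is a minimal sketch and not the authors' pipeline: the function names and the window presets (conventional lung and soft-tissue settings) are assumptions that do not come from the article.

```python
import numpy as np

# Hypothetical (center, width) presets in Hounsfield units (HU).
# These are common display conventions, NOT values taken from the paper.
WINDOWS = {
    "lung": (-600.0, 1500.0),
    "soft_tissue": (40.0, 400.0),
}


def apply_window(hu, center, width):
    """Clip HU values to one display window and rescale them to [0, 1]."""
    lo = center - width / 2.0
    hi = center + width / 2.0
    clipped = np.clip(hu, lo, hi)
    return (clipped - lo) / (hi - lo)


def multi_window_stack(hu, windows=WINDOWS):
    """Return an array of shape (num_windows, *hu.shape), one channel per window."""
    return np.stack(
        [apply_window(hu, center, width) for center, width in windows.values()],
        axis=0,
    )


# Toy 2x2 "slice" in HU, made up for illustration only.
toy_slice = np.array([[-1000.0, 40.0], [240.0, -600.0]])
channels = multi_window_stack(toy_slice)
```

Each channel emphasizes a different tissue range of the same scan, which is one plausible reason a single non-contrast CT can carry information about more than one organ.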

In a low-dose CT cohort covering more than 20,000 individuals, OMAFound achieved detection accuracies of 82.2% for breast cancer and 88.0% for lung cancer among women, and 86.1% for lung cancer among men. Importantly, with the assistance of OMAFound, the sensitivity of senior radiologists improved significantly: by an average of 38.9% for breast cancer, 16.0% for lung cancer, and 21.3% for individual-level assessment, while specificity was not significantly affected. This result aligns with the core goal of screening: to reduce missed diagnoses as much as possible within an acceptable range of false positives, thereby improving the detection of early-stage cases.
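For readers less familiar with these metrics, the following sketch shows how sensitivity and specificity are computed from reading results, and how an absolute gain differs from a relative one. All counts are invented for illustration and are not data from the study; the helper names are likewise hypothetical.

```python
def sensitivity(tp, fn):
    """Fraction of true cancers that were flagged: TP / (TP + FN)."""
    if tp + fn == 0:
        raise ValueError("no positive cases")
    return tp / (tp + fn)


def specificity(tn, fp):
    """Fraction of cancer-free cases correctly cleared: TN / (TN + FP)."""
    if tn + fp == 0:
        raise ValueError("no negative cases")
    return tn / (tn + fp)


# Invented counts for one reader, without and with AI assistance.
unaided = {"tp": 60, "fn": 40, "tn": 900, "fp": 100}
assisted = {"tp": 80, "fn": 20, "tn": 900, "fp": 100}

sens_unaided = sensitivity(unaided["tp"], unaided["fn"])
sens_assisted = sensitivity(assisted["tp"], assisted["fn"])
spec_unaided = specificity(unaided["tn"], unaided["fp"])
spec_assisted = specificity(assisted["tn"], assisted["fp"])

# Two ways to report the same improvement in sensitivity.
absolute_gain = sens_assisted - sens_unaided                    # in proportion points
relative_gain = (sens_assisted - sens_unaided) / sens_unaided   # relative to baseline
```

In this toy case the reader catches more cancers (fewer false negatives) while the number of false positives is unchanged, which is the pattern the paragraph above describes: higher sensitivity with specificity essentially preserved.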

Another prominent feature of OMAFound is its emphasis on model interpretability. Because CT is not a routine tool for breast cancer screening, the model’s attention visualization can help physicians, especially junior doctors, focus more quickly on potential lesion areas. This supports opportunistic breast cancer screening and makes the model’s output easier to understand and accept in clinical use.

Affordability, convenience, and high throughput are essential prerequisites for cancer screening. The OMAFound model developed in this study provides a practical technical tool for AI-enabled “one-scan, multi-screening,” and is expected to promote the real-world implementation of “early detection and early diagnosis,” with important clinical value and social significance.

ShanghaiTech University master’s student Zhiying Liang from Professor Xuejun Qian’s research group is a co-first author of the paper. Master’s student Chenglu Hu and undergraduate student Yiming Wu are co-authors. Professor Xuejun Qian is a co-corresponding author. ShanghaiTech University is the primary institution, and the Shanghai Clinical Research and Trial Center is the collaborating institution.

Paper link: https://www.nature.com/articles/s44360-026-00055-8